use Claude Code?

Claude Code and Codex are brilliant engines — but handed straight to a business user, two things get in the way.

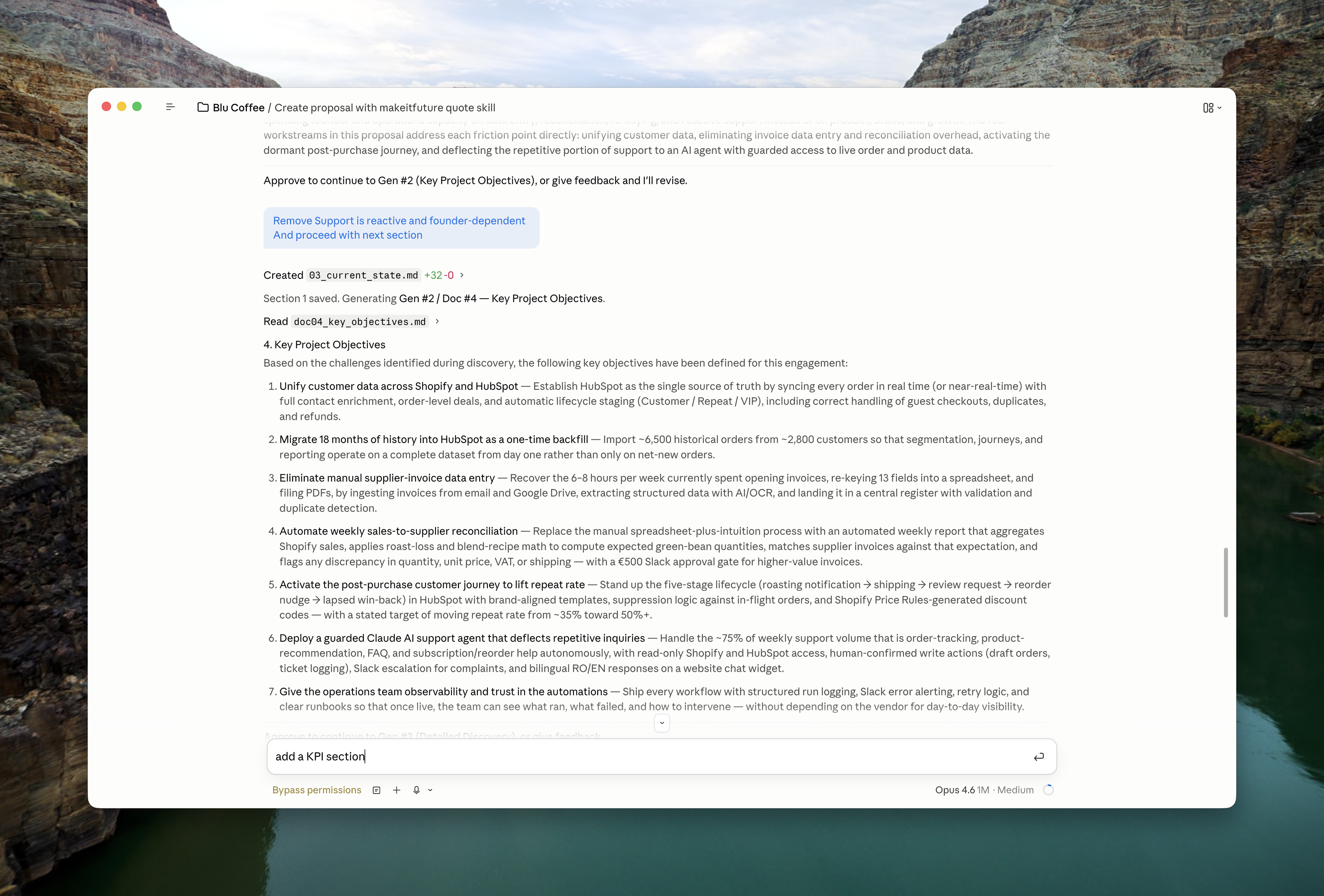

A chat window isn't a business tool

Real work needs forms, dashboards, review flows, audit trails, and multi-user access — not a blinking prompt. Most processes can't be done in a terminal. The AI is ready; the shell it lives in is holding it back.

It doesn't learn from your team

Every session starts fresh. Corrections you made yesterday are gone today. The AI repeats the same mistakes, and there's no natural place for your company's knowledge to accumulate between runs.